A New Language Model Technology

Google declared a breakthrough technology identified as Quiet that speeds up massive language types (like GPT-3 and LaMDA) devoid of compromising functionality degrees.

Greater Schooling Info Is Better But Comes With a Value

Large Language Designs (LLMs) teach on big amounts of facts.

Instruction the language models on more substantial quantities of facts results in the design studying new capabilities that aren’t usually planned for.

For example, introducing far more training info to a language product can unexpectedly result in it attaining the capability to translate concerning distinctive languages, even even though it wasn’t experienced to do that.

These new talents are termed emergent abilities, talents that are not automatically planned for.

A diverse research paper (PDF) about emergent talents states:

“Although there are dozens of examples of emergent skills, there are at present couple of powerful explanations for why this sort of capabilities arise in the way they do.”

They cannot reveal why diverse skills are realized.

But it is nicely recognized that scaling up the amount of money of data for schooling the equipment enables it to obtain extra capabilities.

The draw back of scaling up the instruction info is that it normally takes much more computational electricity to develop an output, which helps make the AI slower at the time it is building a text output (a second that is called the “inference time”).

So the trade-off with making an AI smarter with far more data is that the AI also gets to be slower at inference time.

Google’s new exploration paper (Confident Adaptive Language Modeling PDF) describes the problem like this:

“Recent advancements in Transformer-dependent large language models (LLMs) have led to sizeable efficiency advancements throughout quite a few responsibilities.

These gains appear with a drastic increase in the models’ measurement, perhaps top to gradual and highly-priced use at inference time.”

Self-confident Adaptive Language Modeling (Serene)

Researchers at Google came upon an appealing resolution for dashing up the language styles though also preserving significant performance.

The resolution, to make an analogy, is fairly like the distinction concerning answering an effortless query and fixing a far more difficult one particular.

An uncomplicated query, like what shade is the sky, can be answered with very little believed.

But a hard answer calls for 1 to halt and imagine a very little much more to find the remedy.

Computationally, significant language styles do not make a distinction concerning a difficult aspect of a textual content era task and an simple component.

They crank out textual content for equally the straightforward and complicated sections using their comprehensive computing energy at inference time.

Google’s option is identified as Confident Adaptive Language Modeling (Relaxed).

What this new framework does is to commit considerably less resources to trivial portions of a textual content era activity and commit the full ability for extra complicated areas.

The investigate paper on Serene states the difficulty and resolution like this:

“Recent advancements in Transformer-based mostly significant language models (LLMs) have led to considerable overall performance enhancements across many jobs.

These gains appear with a drastic increase in the models’ dimensions, perhaps foremost to sluggish and high priced use at inference time.

In apply, nonetheless, the collection of generations designed by LLMs is composed of varying concentrations of issues.

Whilst selected predictions actually profit from the models’ comprehensive capacity, other continuations are extra trivial and can be solved with decreased compute.

…While massive products do greater in standard, the similar sum of computation may possibly not be essential for each individual enter to obtain very similar efficiency (e.g., relying on if the input is simple or difficult).”

What is Google Tranquil and Does it Function?

Relaxed performs by dynamically allocating methods dependent on the complexity of the personal component of the process, using an algorithm to predict whether or not anything needs full or partial methods.

The research paper shares that they analyzed the new program for several all-natural language processing duties (“text summarization, device translation, and query answering”) and uncovered that they have been capable to pace up the inference by about a issue of 3 (300{18875d16fb0f706a77d6d07e16021550e0abfa6771e72d372d5d32476b7d07ec}).

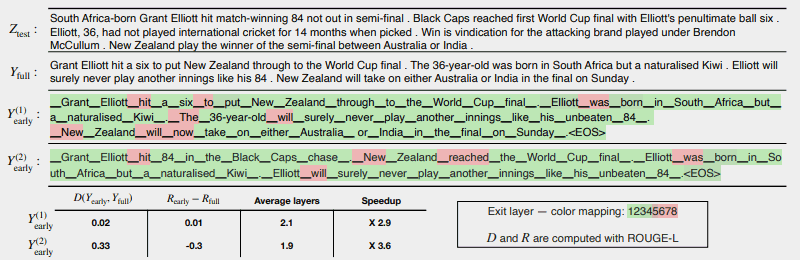

The next illustration displays how effectively the Quiet technique will work.

The number of spots in red indicate exactly where the machine had to use its total capability on that segment of the task.

The areas in environmentally friendly are wherever the device only utilized a lot less than half capacity.

Purple = Whole Capability/Eco-friendly = Considerably less Than Fifty percent Capacity

This is what the investigation paper states about the above illustration:

“CALM accelerates the era by early exiting when doable, and selectively using the comprehensive decoder’s potential only for few tokens, demonstrated in this article on a CNN/DM case in point with softmax-based confidence evaluate. Y (1) early and Y (2) early use distinct assurance thresholds for early exiting.

Bellow (sic) the text, we report the calculated textual and hazard consistency of just about every of the two outputs, together with performance gains.

The hues stand for the quantity of decoding layers used for just about every token—light inexperienced shades show much less than 50 {18875d16fb0f706a77d6d07e16021550e0abfa6771e72d372d5d32476b7d07ec} of the overall levels.

Only a several picked tokens use the entire capacity of the model (colored in purple), while for most tokens the product exits right after just one or several decoding levels (coloured in green).”

The researchers concluded the paper by noting that implementing Quiet involves only minimal modifications in purchase to adapt a large language design to develop into more rapidly.

This investigation is crucial because it opens the doorway to building extra advanced AI models that are educated on considerably larger sized data sets with out encountering slower velocity although maintaining a substantial efficiency degree.

Yet it may be possible that this strategy can also profit massive language designs that are qualified on less facts as effectively.

For case in point, InstructGPT versions, of which ChatGPT is a sibling product, are experienced on somewhere around 1.3 billion parameters but are nonetheless able to outperform designs that are trained on substantially much more parameters.

The scientists mentioned in the conclusion:

“Overall, our entire adaptive compute framework for LMs involves minimum modifications to the fundamental design and allows effectiveness gains whilst fulfilling arduous excellent ensures for the output.”

This information and facts about this study paper was just revealed on Google’s AI website on December 16, 2022. The research paper by itself is dated October 25, 2022.

It will be fascinating to see if this technology will make it way into massive language styles of the around long term.

Study Google’s web site article:

Accelerating Textual content Generation with Self-assured Adaptive Language Modeling (Quiet)

Browse the Investigate Paper:

Confident Adaptive Language Modeling (PDF)

Showcased graphic by Shutterstock/Learn1305